Code

OpenL3

OpenL3 is an open-source Python library for computing deep audio and image embeddings.

CREPE Pitch Tracker

CREPE is a monophonic pitch tracker written in Python, based on a deep convolutional neural network that operates directly on the time-domain waveform.

Scaper

A Python library for soundscape synthesis and augmentation.

muda

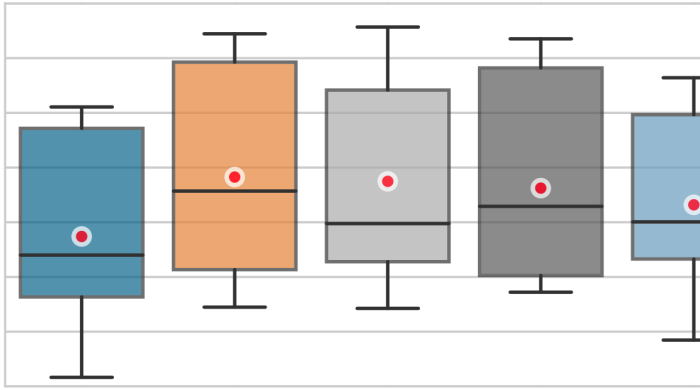

A Python library for Musical Data Augmentation. The goal of this package is to make it easy for practitioners to consistently apply perturbations to annotated music data for the purpose of fitting statistical models.
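To illustrate what applying a perturbation "consistently" to annotated data means, here is a minimal sketch using only the Python standard library. It does not use muda's API: the `Note` class and both helper functions are hypothetical names introduced for this example. It also covers only the annotation side, and assumes the matching audio deformation is applied separately with the same parameters.

```python
from dataclasses import dataclass, replace
from typing import List


@dataclass(frozen=True)
class Note:
    """Hypothetical note annotation (not a muda or JAMS type)."""
    onset: float     # seconds
    duration: float  # seconds
    pitch: float     # MIDI note number


def time_stretch_annotations(notes: List[Note], rate: float) -> List[Note]:
    """Rescale note times for audio played `rate` times faster."""
    if rate <= 0:
        raise ValueError("rate must be positive")
    return [
        replace(n, onset=n.onset / rate, duration=n.duration / rate)
        for n in notes
    ]


def pitch_shift_annotations(notes: List[Note], n_semitones: float) -> List[Note]:
    """Shift every annotated pitch by the same number of semitones."""
    return [replace(n, pitch=n.pitch + n_semitones) for n in notes]


notes = [Note(0.0, 0.5, 60.0), Note(1.0, 1.0, 64.0)]
stretched = time_stretch_annotations(notes, rate=2.0)   # audio twice as fast
shifted = pitch_shift_annotations(notes, n_semitones=-2)  # audio two semitones lower
```

If the audio is sped up by a factor of 2, every onset and duration must be halved, otherwise the labels no longer line up with the signal.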

jams

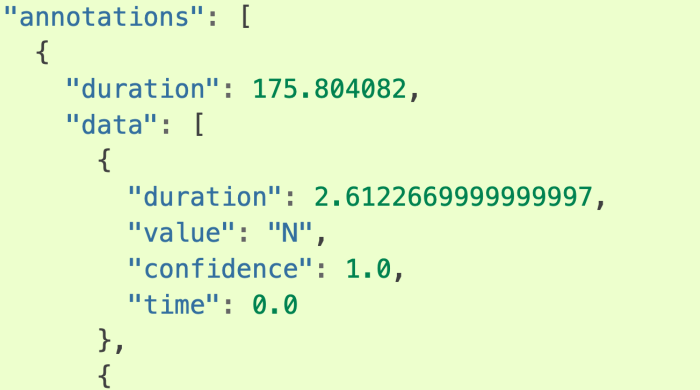

A JSON Annotated Music Specification for Reproducible MIR Research.
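As a rough illustration of the idea, the sketch below builds a small JAMS-style document with the standard `json` module rather than the jams library. The layout (file metadata, a list of annotations, and timed observations with a value and confidence) is simplified and written from memory, and the track title and beat times are made up for the example. Check it against the official JAMS schema before relying on it.

```python
import json

# A simplified, JAMS-style annotation document (illustrative values only).
jam = {
    "file_metadata": {"title": "Example track", "duration": 4.0},
    "annotations": [
        {
            "namespace": "beat",
            "annotation_metadata": {"data_source": "manual"},
            "data": [
                {"time": 0.5, "duration": 0.0, "value": 1, "confidence": None},
                {"time": 1.0, "duration": 0.0, "value": 2, "confidence": None},
                {"time": 1.5, "duration": 0.0, "value": 3, "confidence": None},
            ],
            "sandbox": {},
        }
    ],
    "sandbox": {},
}

# Serialize to JSON text and read it back, as any JSON-aware tool could.
text = json.dumps(jam, indent=2)
restored = json.loads(text)

# Pull the beat times out of the first annotation.
beat_times = [obs["time"] for obs in restored["annotations"][0]["data"]]
```

Because the annotations are plain JSON, they can be versioned, diffed, and exchanged between tools without a custom parser.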

BirdVoxDetect

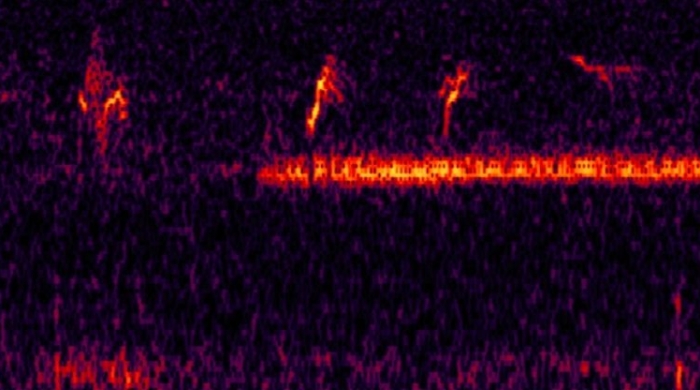

BirdVoxDetect is a pre-trained deep learning system that detects flight calls from songbirds in audio recordings and retrieves the corresponding species.

librosa

librosa is a Python package for music and audio analysis. It provides the building blocks necessary to create music information retrieval systems.

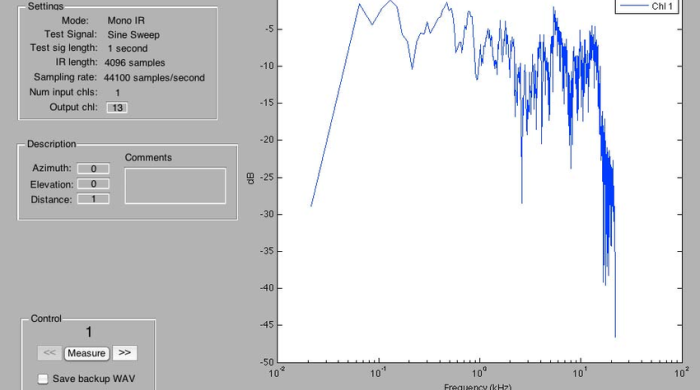

ScanIR

ScanIR is a MATLAB application for flexible multichannel acoustic impulse response measurement, intended for public distribution.

Kymatio

Kymatio is an implementation of the wavelet scattering transform in the Python programming language, suitable for large-scale numerical experiments in signal processing and machine learning.

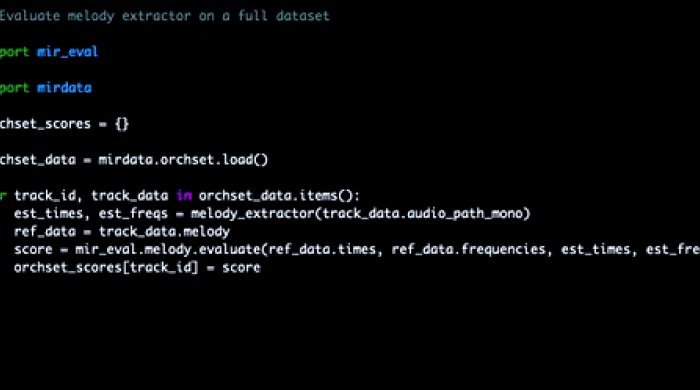

Mirdata

This library provides tools for working with common Music Information Retrieval (MIR) datasets.

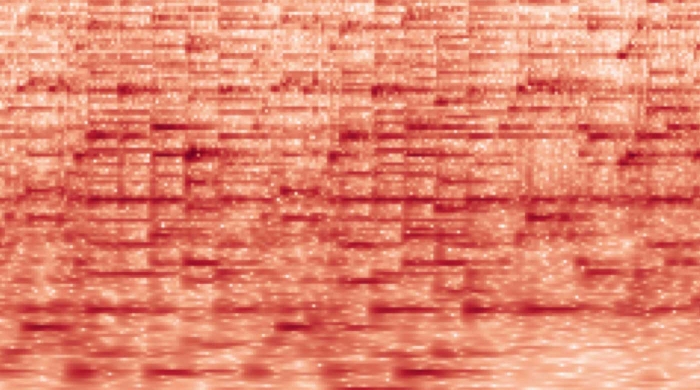

Deep Salience

Companion code for the paper "Deep Salience Representations for $F_0$ Estimation in Polyphonic Music."

Music Structure Analysis Framework

A Python framework to analyze music structure.

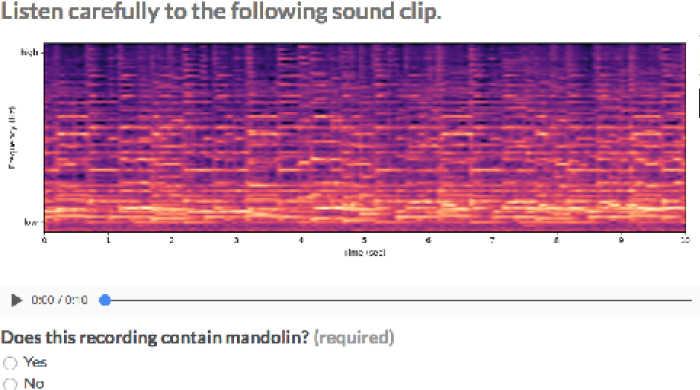

Open-MIC

This repository contains companion source code for working with the OpenMIC-2018 dataset, a collection of audio and crowd-sourced instrument labels.

Datasets

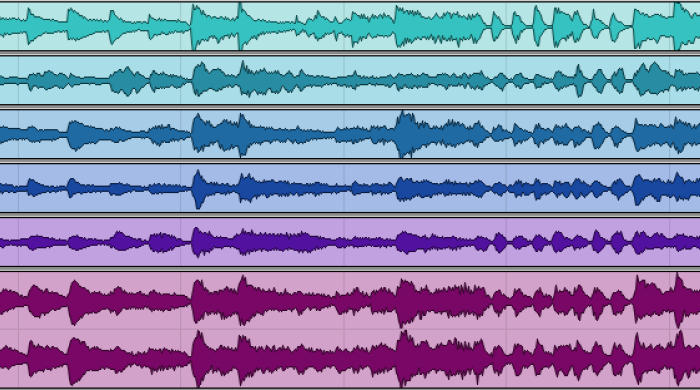

MedleyDB

MedleyDB is a dataset of annotated, royalty-free multitrack recordings for noncommercial and academic research.

Head-Related Impulse Responses Repository

This HRIR repository consists of 113 datasets from 4 publicly available HRTF databases, namely LISTEN, CIPIC, FIU, and MIT-KEMAR.

HMDiR HRTF dataset

The Head-Mounted-Display acoustic Impulse Responses dataset comprises HRIR measurements for 1200 locations, collected on a Neumann KU-100 mannequin fitted with a variety of HMDs used for virtual, augmented, or mixed reality.

CityTones Soundfield Repository

The CityTones project is a collaborative open-source repository that consists of 360 audio/visual environmental recordings for both creative and academic purposes.

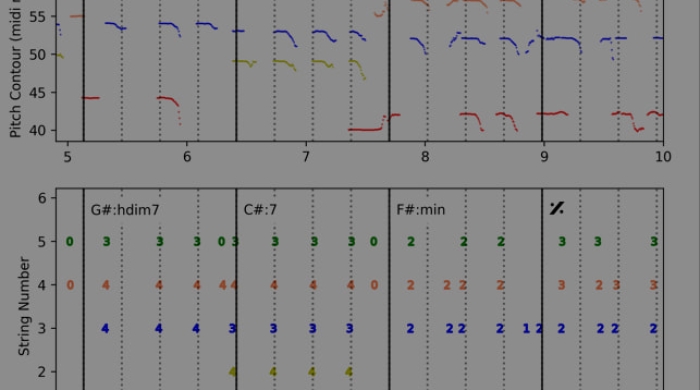

GuitarSet

A dataset for guitar transcription.

BirdVox-DCASE-20k

The BirdVox-DCASE-20k dataset contains 20,000 ten-second audio recordings. These recordings come from ROBIN autonomous recording units placed near Ithaca, NY, USA, during the fall of 2015.

UrbanSound8K

This dataset contains 8732 labeled sound excerpts (≤ 4 s) of urban sounds.

URBAN-SED

A dataset of 10,000 soundscapes with sound event annotations, generated using Scaper.

OpenMIC-2018

The OpenMIC-2018 dataset is made available through a collaboration between Spotify and MARL@NYU.

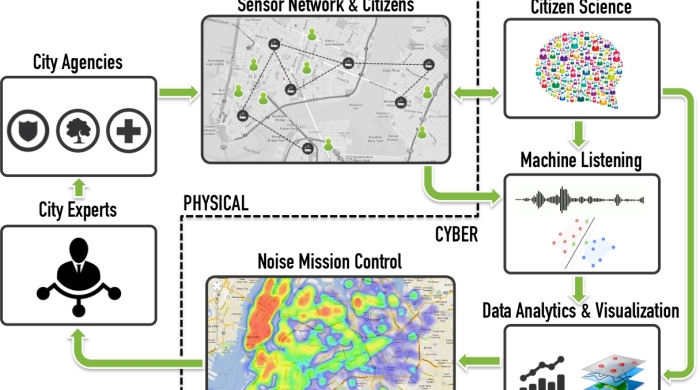

SONYC-UST

SONYC Urban Sound Tagging (SONYC-UST) is a dataset for the development and evaluation of machine listening systems for realistic urban noise monitoring.