Interdisciplinary Spencer Funded Workshop on Learning Analytics for Equity (#LA4Equity)

Event leadership: Alyssa Wise (Director, NYU-LEARN) and Fabienne Doucet (Executive Director, NYU Metro Center)

Dates: Sunday May 21st to Tuesday May 23rd, 2023

LEARN partnered with NYU Metro Center in organizing the “Innovating a New Generation of Learning Analytics for Educational Equity” workshop (#LA4Equity), funded by the Spencer Foundation. Alyssa Wise and Fabienne Doucet led the two-and-a-half-day event with an interdisciplinary group of 27 key leaders in the higher education space working on data, equity, and learning analytics to connect knowledge of what is possible through data science with what is most needed to promote equitable outcomes for post-secondary students.

The group convened on May 21st-23rd, 2023 off-campus in New Jersey for a series of highly engaged discussions that bridged long-standing gaps in communication and generated an extensive list of avenues for future research.

Equity-Oriented Learning Analytics: A Cross-Community Conversation

Renzhe Yu: Columbia University

Fabienne Doucet: New York University

Alyssa Wise: New York University

Thursday, April 27, 2023

This hybrid event generated interdisciplinary dialogue around the use of analytics to advance equity, bridging perspectives from the analytics and equity communities. First, invited expert Renzhe Yu, Assistant Professor of Learning Analytics/Educational Data Mining at Columbia University, drew on his own research to discuss some key opportunities and challenges of equity-oriented educational data science. Then Fabienne Doucet, Executive Director of NYU Metro Center and Associate Professor of Early Childhood and Urban Education, shared a response from an equity-first perspective. The event was facilitated by the director of NYU-LEARN, Alyssa Wise.

Renzhe Yu's Presentation Slides Watch Recording of Equity-Oriented Educational Data Science: Opportunities and Challenges

The Power of Programming Analytics: How Knowing What Your Students Know Can Inform Your Instruction (Interactive Event)

Sharon Hsiao: Santa Clara University

Alyssa Wise: New York University

Fanjie Li: New York University

Friday, March 31, 2023

This interactive event discussed how recent developments in learning analytics provide exciting new opportunities for insight into students’ programming knowledge, strategies, and challenges by modeling their process data (e.g., code snapshots, execution logs). Our invited expert Sharon Hsiao shared her perspective on Data-Driven Engineering in Programming Language Learning, following an interactive activity designed by NYU-LEARN's doctoral scholar Fanjie Li. The event was facilitated by the director of NYU-LEARN, Alyssa Wise.

Sharon Hsiao's Presentation Slides Watch Recording of Data-Driven Engineering in Programming Language Learning

Understanding Learners through Data in Computer Science Education

Matt Davidson, Apple

Michael Lee, New Jersey Institute of Technology (NJIT)

Kayla DesPortes, New York University

Thursday, November 17, 2022

Our invited experts Matt Davidson and Michael Lee shared their work using novel tools and process mining methods to provide insight into how students learn computer science. The conversation was moderated by NYU-LEARN core faculty member Kayla DesPortes.

Watch Recording of Understanding Learners through Data in Computer Science Education

Data Literacy in the 21st Century

Shiri Mund, Lifelines

Annika Wolff, LUT University

Rahul Bhargava, Northeastern University

Yoav Bergner, New York University

Thursday, October 6, 2022

This conversation explored how different ideas about data literacy show up in different educational contexts and how we can start to define and take stock of levels of data literacy to inform learning analytics use and more. In this twist on our usual Learning Analytics Conversation Series format, expert commentators Annika Wolff and Rahul Bhargava shared their perspectives on the different conceptions of data literacy presented by NYU-LEARN graduate Shiri Mund. The conversation was moderated by NYU-LEARN core faculty member Yoav Bergner.

Shiri Mund's Presentation Slides Watch Recording of Data Literacy in the 21st Century

ACM Conference on Learning at Scale (L@S)

The ACM Conference on Learning at Scale (L@S) is an interdisciplinary and progressive event that brings together those interested in investigating large-scale, technology-mediated learning environments. L@S 2022 took place at Cornell Tech’s Roosevelt Island Campus in New York City from June 1-3, 2022 with the theme of “Blending @ Scale.” LEARN Core Faculty Xavier Ochoa helped organize this year’s event as one of the Program Chairs.

LEARN @ LASI22

LASI (the Learning Analytics Summer Institute) is an educational event organized by the Society for Learning Analytics Research (SoLAR) and designed to accelerate the maturation of the discipline. It took place online from June 13 to June 17, 2022. LEARN organized two workshops and one training at LASI22.

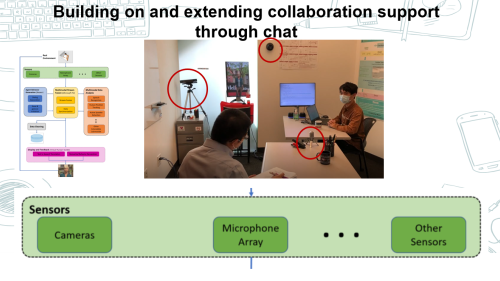

Multimodal Learning Analytics for In-Person and Remote Collaboration

Monday, June 13, 2022, Wednesday, June 15, 2022, and Friday, June 17, 2022

LEARN core faculty Xavier Ochoa led a workshop for researchers who have some experience with traditional Learning Analytics and want to expand their capabilities to physical learning spaces. This workshop was a gentle introduction to Multimodal Learning Analytics (MMLA): its promises, its challenges, and its tools and methodologies. It included a hands-on learning experience analyzing different types of signals captured from real environments, emphasizing strategies, techniques, and constructs related to collaboration analytics.

Human Centered Learning Analytics

Monday, June 13, 2022, Wednesday, June 15, 2022, and Friday, June 17, 2022

LEARN doctoral students Juan Pablo Sarmiento and Fabio Campos organized this workshop to help anyone, with or without experience in human-centered design, learn how human-centered design methods can be applied to learning analytics contexts. Building on the growing interest in designing Learning Analytics (LA) systems with stakeholders, this workshop shared insights around the contributions that Human-Centered Design theory and practice make to LA system conception, design, implementation, and evaluation.

Power to the User: Using Participatory Design to develop Learning Analytics tools

Tuesday, June 14, 2022 and Thursday, June 16, 2022

For the second year in a row, LEARN doctoral students Juan Pablo Sarmiento and Fabio Campos led a tutorial for beginners to human-centered design who are interested in developing Learning Analytics tools or implementing participatory processes. The tutorial covered how to involve stakeholders in design processes in order to empower them and to produce tools that are adjusted to their needs, routines, and values. They presented a number of processes, frameworks, and examples for engaging in participatory design processes with students, faculty, or administrators, emphasizing both opportunities and challenges. Participants worked with a current or past project to develop first steps toward an outline for a participatory design process.

An Introduction to Methodologies in Learning Analytics

Tuesday, June 14, 2022 and Thursday, June 16, 2022

LEARN core faculty Yoav Bergner led a tutorial for newcomers that explored the structure of learning analytics arguments and outlined some best practices from the framing of research questions to selection of methods to collaboration on and communication of analytic results. Participants designed a research study (in 5 minutes!) using the Heilmeier catechism; explored data sets to identify types of variables, missingness, outliers, and other irregularities; discussed levels of analytics from description to prediction, explanation, and causal inference; identified research questions and methods in published LAK/JLA papers and entered results into the MLA Airtable; and learned to use R Markdown notebooks (and GitHub) to produce (and collaborate on) reproducible research.

LEARN @ LAK22

LAK (the International Conference on Learning Analytics and Knowledge) is the premier academic event for the international learning analytics community and took place online this year from March 21 to March 25, 2022 with the theme of "Learning Analytics for Transition, Disruption and Social Change." LEARN presented four papers and organized two workshops at LAK22.

We are pleased to share that LEARN Director Alyssa Wise helped to organize this year’s event as one of the Program Chairs.

Unpacking Instructors’ Analytics Use: Two Distinct Profiles for Informing Teaching

March 23, 2022

Former LEARN postdoc Qiujie Li, along with LEARN doctoral scholars Yeonji Jung and Bernice d'Anjou and director Alyssa Wise, presented their paper, which addresses the gap in knowledge about how instructors differ in their use of analytics to inform teaching by examining the ways that thirteen college instructors engaged with a set of university-provided analytics. This paper won the best short paper award.

Towards a Pragmatic and Theory-Driven Framework for Multimodal Collaboration Feedback

March 23, 2022

LEARN master's scholar Maurice Boothe Jr., faculty member Xavier Ochoa, and colleagues presented a paper proposing an overarching framework for automated collaboration feedback that bridges theory and tool as well as technology and pedagogy.

Read the Towards a Pragmatic and Theory Driven Framework Paper

An Exploratory Evaluation of a Collaboration Feedback Report

March 25, 2022

LEARN faculty Xavier Ochoa and colleagues presented their paper, which reports an exploratory evaluation to understand the effects of a collaboration feedback report through an authentic study conducted in regular classes.

Read the An Exploratory Evaluation of a Collaboration Feedback Report Paper

Participatory and Co-Design of Learning Analytics: An Initial Review of the Literature

March 23, 2022

This paper by LEARN doctoral scholar Juan Pablo Sarmiento and director Alyssa Wise serves as a synopsis of how Participatory Design (PD) and Co-design (Co-D) have been applied to the specific needs of Learning Analytics (LA). They presented their study, which reviewed 90 papers describing 52 cases of PD of LA between 2010 and 2020 to address the research question “How is participatory design (PD) being used within LA?”

CROSSMMLA & SLE Workshop

Learning Analytics for Smart Learning Environments Crossing Physical and Virtual Learning Spaces

March 21, 2022

LEARN faculty Xavier Ochoa and colleagues organized this workshop as a forum to exchange ideas on how we as a community can use our knowledge and experiences from CrossMMLA to design new tools to analyze evidence from multimodal data and Smart Learning Environments (SLEs). It aimed to discuss the main issues for furthering research, development, and implementation with SLEs, how these overlap with Learning Analytics (LA), and how SLE research and practice can utilize the latest advances in LA.

Third International Workshop on Human Centred Learning Analytics (HCLA)

March 22, 2022

LEARN doctoral scholars, Juan Pablo Sarmiento and Fabio Campos, were two of the workshop organizers. This workshop aimed to address some of the questions regarding how the Learning Analytics community can appropriate design approaches from other communities and identify best practices that can be more suitable for LA developments.

Visit the Third International Workshop on HCLA Workshop Website

Using Multimodal Learning Analytics to Address the Challenges of Online Collaboration

Vanessa Echeverria, Escuela Superior Politecnica del Litoral

Mutlu Cukurova, University College London

April 19, 2022

This conversation examined how multimodal learning analytics (MMLA) can support student online collaboration. Dr. Vanessa Echeverria, the first expert, discussed "From Multimodal Data to Meaningful User Interfaces". The second expert, Dr. Mutlu Cukurova, presented on "MMLA goes to Uni: The Promise and Challenges of Implementing MMLA in Real-World Settings".

Vanessa Echeverria's Presentation Slides Mutlu Cukurova's Presentation Slides

Bigger than Just A Course: Using Analytics to See and Improve Educational Degrees - A Conversation

Leah Macfadyen, University of British Columbia

Isabel Hilliger, Pontificia Universidad Católica de Chile

Andrew Brackett, New York University

February 22, 2022

This conversation examined how learning analytics can support the "big picture" of improving educational degrees. Dr. Leah Macfadyen, the first expert, discussed "Using learning analytics to support curriculum review & learning (re)design". The second expert, Dr. Isabel Hilliger, spoke about the "Design of curriculum analytics tools to support continuous improvement processes in higher education programs". Dr. Andrew Brackett, Assistant Director of Learning Analytics in NYU IT's Teaching and Learning with Technology unit, moderated the conversation.

Leah Macfadyen's Presentation Slides Isabel Hilliger's Presentation Slides

Watch Recording of Bigger than Just A Course: Using Analytics to See and Improve Educational Degrees

The Power of Learning Analytic Tools for Large Introductory Classes: A Conversation

Abelardo Pardo, University of South Australia

Timothy McKay, University of Michigan

Lucy Appert, New York University

November 2, 2021

Our second Learning Analytics Conversation Series event of the semester explored different strategies and challenges for enriching large introductory courses with learning analytic tools. The first expert, Dr. Abelardo Pardo, discussed "Rule-Based Support Systems to Promote Student Engagement". The second expert, Dr. Timothy McKay, discussed "Personalizing Large Courses Using Data: ECoach and More". Dr. Lucy Appert, Director of Educational Technology for NYU's Arts & Science division, moderated the discussion.

Timothy McKay's Presentation Slides Abelardo Pardo's Presentation Slides

Watch Recording of the power of learning analytic tools for large introductory classes

Upping Your Advising Game with Learning Analytics: A Conversation

Tinne De Laet, Katholieke Universiteit Leuven

Kyle Jones, Indiana University-Indianapolis

Emily Schlam, New York University

October 19, 2021

This Learning Analytics Conversation Series event engaged with two different perspectives on how we can responsibly use learning analytics to support academic advising within higher education. The first expert, Dr. Tinne De Laet, discussed "AI for Academic Advising, a Matter of Trust?". The second expert, Dr. Kyle Jones, discussed "Advising, Nudging, or Coercing? Ethics of Advising Analytics". Dr. Emily Schlam, Senior Director at Student Success at NYU, moderated the discussion.

Tinne De Laet's Presentation Slides Kyle Jones's Presentation Slides

Watch Recording of Upping Your Advising Game with Learning Analytics

LEARN @ LASI21

LASI (the Learning Analytics Summer Institute) is an educational event organized by the Society for Learning Analytics Research (SoLAR) and designed to accelerate the maturation of the discipline. It took place online from June 21, 2021 to June 25, 2021. LEARN organized three tutorials at LASI21.

Power to the User: Using Participatory Design to Develop Learning Analytics Tools

Tuesday, June 22, 2021 and Thursday, June 24, 2021

LEARN doctoral students Juan Pablo Sarmiento and Fabio Campos led a tutorial at LASI on using participatory design to develop learning analytics tools. They presented a number of processes, frameworks, and examples for engaging in participatory design processes with students, faculty, or administrators, emphasizing both opportunities and challenges. In the tutorial, participants worked with a current or past project to develop first steps toward an outline for a participatory design process.

Writing and Publishing a Rigorous Learning Analytics Article

Tuesday, June 22, 2021 and Thursday, June 24, 2021

LEARN’s Alyssa Wise and Xavier Ochoa led a tutorial with Simon Knight from University of Technology Sydney. The interactive session focused on developing rigorous learning analytics manuscripts for publication in top-tier publications (including but not limited to the Journal of Learning Analytics). Topics encompassed the full breadth of quality criteria, from issues of technical reporting to situating contributions in the literature and knowledge base of the field. Common pitfalls and ways to address them were discussed. While targeted at manuscript development, the session was of use to reviewers as well.

An Introduction to Methodologies in Learning Analytics

Tuesday, June 22, 2021 and Thursday, June 24, 2021

LEARN’s Yoav Bergner led a tutorial that explored the structure of learning analytics arguments and outlined some best practices from the framing of research questions to selection of methods to collaboration on and communication of analytic results.

LEARN @ LAK21

LAK (the International Conference on Learning Analytics and Knowledge) is the premier academic event for the international learning analytics community and took place online this year from April 12 to April 16, 2021. LEARN presented two papers at LAK21.

Subversive Learning Analytics

Wednesday, April 14, 2021

LEARN doctoral student Juan Pablo Sarmiento, master's student Maurice Boothe Jr., and director Alyssa Wise presented their work at LAK'21 conceptualizing Subversive Learning Analytics (SLA). SLA arises from the premise that data should be used as a transformative force for change, rather than to perpetuate an often-problematic status quo, and uses a critical stance to reveal and challenge the power structures and inequities in education. This paper won the best short paper award.

Presentation Slides for Subversive Learning Analytics Read the paper

Beyond First Encounters with Analytics

Questions, Techniques and Challenges in Instructors’ Sensemaking

Wednesday, April 14, 2021

LEARN postdoctoral researcher Qiujie Li, doctoral student Yeonji Jung, and director Alyssa Wise presented their work at LAK'21 investigating the different questions instructors ask of learning analytics, the techniques they use to answer them, and the challenges they face working in this new paradigm of data-informed pedagogical decision making.

Presentation Slides for Beyond First Encounters with Analytics Read the paper

Fairness & Equity in Learning Analytics: A Conversation

Paul Prinsloo, University of South Africa

Ravi Shroff, New York University

March 30, 2021

This second event in our new Learning Analytics Conversation Series examined two different perspectives on how we can productively navigate the intersection of learning analytics and equity and consider the role of educational data science as a tool for social justice. This format had each expert provide a brief overview of their work in the area, followed by a moderated dialogue about commonalities, differences, and emergent high-level principles. The first expert, Dr. Paul Prinsloo, spoke about "Reconciling Learning Analytics and Social Justice: Contemplating the (Im)possible". The second expert, Dr. Ravi Shroff, presented on "Towards Transparent Interpretable Models: Simple Rules to Guide Expert Classifications".

Paul Prinsloo's Presentation Slides Ravi Shroff's Presentation Slides

Scaling Up Learning Analytics: A Conversation

Maren Scheffel, Ruhr University Bochum

John Whitmer, Federation of American Scientists

March 16, 2021

This inaugural event in our new Learning Analytics Conversation Series explored different strategies and challenges for scaling up learning analytics in university, commercial, and public sector contexts. This format had each expert provide a brief overview of their work in the area, followed by a moderated dialogue about commonalities, differences, and emergent high-level principles. The first expert, Dr. Maren Scheffel, discussed "Creating Higher Education Institutional Policies to Sustainably Establish and Improve Learning Analytics Adoption: Why Involving All Stakeholders is Key". The second expert, Dr. John Whitmer, discussed "Integrating Research & Production Learning Analytics: Tips Learned from Commercial & Public Sector Experiences".

John Whitmer's Presentation Slides Maren Scheffel's Presentation Slides

6 Techniques for Participatory Design of Learning Analytics

Juan Pablo Sarmiento, New York University

February 10, 2020

This workshop, led by NYU-LEARN doctoral scholar Juan Pablo Sarmiento and offered through the Learning Analytics Learning Network (LALN), guided participants through six concrete techniques and methods they can leverage to create participatory workshops with educators and students. Participants developed the start of a plan to incorporate members of their learning community into their next learning analytics design project.

Workshop Slides of Juan Pablo Sarmiento's workshop Video Recording of Juan Pablo Sarmiento's workshop

The Next Frontier of AI-Enabled Collaborative Learning

Carolyn Rosé, Carnegie Mellon University

November 18, 2020

Dr. Carolyn Rosé joined NYU-LEARN for a cutting-edge talk on how language technologies can support student teamwork and on-the-job workplace learning. Using Online Mob Programming (OMP), Dr. Rosé and her team aim to encourage project teams in the classroom to reflect on concepts and share work in the midst of their project experience with the goal of bringing dynamic collaboration support into physical spaces through Virtual Humans.

Presentation Slides of Carolyn Rosé's talk Video Recording of Carolyn Rosé's talk

What Data Would Make You Change (Your) Course?

October 29, 2020

An interactive workshop for NYU Faculty cohosted with NYU Teaching and Learning with Technology. This workshop focused on practical approaches to improving teaching and course design using data. Instructors shared their experiences teaching their classes this (unusual) year, and learned some easy methods of using data to shape a potential improvement into a real plan.

Workshop Slides for What Data Would Make You Change (Your) Course?

Multimodal Data Stories to Support Reflection in Clinical Simulations

Roberto Martinez-Maldonado, Monash University

October 14, 2020

Dr. Roberto Martinez-Maldonado joined NYU-LEARN for an innovative talk on the use of sensing technologies in clinical simulations. Using co-designed Data Stories and Multimodal Learning Analytics (MMLA), Dr. Martinez-Maldonado and his team aim to provide automated feedback to healthcare students and facilitators that can inform the clinical debrief and help identify areas that need further development.

Presentation Slides of Roberto Martinez-Maldonado's talk Video recording of Roberto Martinez-Maldonado's talk

College in the Time of Corona

Spring 2020 Student Survey Results & Community Discussion

Alyssa Wise & Yoav Bergner, New York University

September 30, 2020

The Learning Analytics Research Network (LEARN) hosted a timely conversation that explored how students across the US experienced the rapid transition to remote instruction in Spring 2020. Drs. Alyssa Wise and Yoav Bergner shared the results from LEARN’s recent IRB-approved, 15-question student survey. Over 250 students from various universities responded to the survey between late March and early May 2020, offering unique insight into the experience of this transition as it occurred. They also led a discussion of the results' implications for our teaching and learning practices moving forward.

Presentation Slides of College in the Time of Corona event Video Recording of College in the Time of Corona event

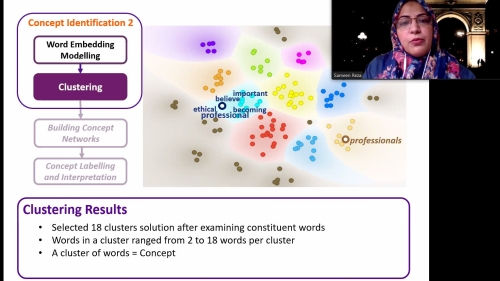

Tracing Professional Identity Development through (Mixed-Methods) Data Mining

Sameen Reza, New York University

June 18, 2020

As part of ICLS (the International Conference of the Learning Sciences) 2020, we invited members of both the Learning Sciences and Learning Analytics communities for a unique conversation exploring the promise and potential peril of data-mining methods for generating insight into complex human learning processes. The session, led by LEARN postdoctoral scholar Sameen Reza, was built around the accepted ICLS paper “Tracing Professional Identity Development through Mixed-Methods Data Mining of Student Reflections”, with an invited commentary by Professor David Shaffer followed by an open community discussion.

Presentation Slides on Tracing Professional Identity Development through (Mixed-Methods) Data Mining Video Recording of Tracing Professional Identity Development through (Mixed-Methods) Data Mining presentation

Learning Analytics: Facing Up to Ethical Challenges

Rebecca Ferguson, The Open University

March 3, 2020

An insightful talk into the ways in which The Open University, UK is working to build a culture of responsible learning analytics use. Dr. Rebecca Ferguson described six key ethical challenges and how they are currently being tackled at The Open University, UK.

Debating Data Dilemmas

A Workshop by NYU-LEARN

February 18, 2020

How do we balance insight into learning with protecting student privacy? What are the implications of using analytics to designate certain students as “at-risk”? Should I be able to compare my data to other students’? In this workshop, the NYU-LEARN team facilitated discussion and debate of real-world dilemmas that have arisen in the use of analytic data to support learning. Talking in small groups, participants identified the different principles and tensions at play from different perspectives. Through the event, participants developed a deeper understanding of the complex balances at play in learning analytics work and how to support capacity-building efforts to address them at New York University.

Data Enhanced Learning and the Quantified Student

Marcus Specht, Delft University of Technology

November 21, 2019

Social and educational sciences have been collecting data about the effectiveness and the efficiency of educational practices for some time. In recent years especially, more data-driven approaches have been developed in the field of Learning Analytics to create dashboards and tools for monitoring educational activities. Reflection and personalised feedback are some of the most efficient means for enhancing human learning. Without feedback that makes our progress visible and tangible, we lose our way. Data tracking in digital systems and sensor-based wearable computing enable data-informed learning support for reflection and personalisation. In this talk, Dr. Specht shared the work he and his team are doing to develop data-enhanced learning and human-centred learning analytics at the Leiden-Delft-Erasmus Center for Education and Learning.

Developing 21st-Century Skills with Multimodal Analytics Interactive Demos and Discussion

Xavier Ochoa, New York University

November 12, 2019

An interactive experience and exploration of how Multimodal Learning Analytics (MMLA) can improve learning and teaching processes. Xavier Ochoa, one of LEARN’s core faculty and Assistant Professor of Learning Analytics, offered hands-on demonstrations of two cutting-edge systems for collecting real-time data in physical spaces to automatically generate feedback for communication and collaboration skills. Demos were followed by a discussion of the ways these tools can be applied to support students and instructors.

SoLAR Webinar: Designing Learning Analytics for Humans with Humans

Alyssa Wise, New York University

October 16, 2019

To be effective, learning analytics tools must not only be technically robust but also designed to support use by real people. In this webinar, Alyssa Wise presented a diverse set of examples of the ways that NYU-LEARN is including educators and students in the process of building and implementing learning analytics. She shared examples of how to: involve students in the creation and revision of learning analytics solutions for their own use; work with instructors to align analytically available metrics with valued course pedagogy; and partner with an educational team to design and implement interventions based on predictions of at-risk students. Watch this webinar to gain a sense of both the conceptual issues and practical concerns involved in designing learning analytics for humans with humans.

Presentation Slides from Designing Learning Analytics for Humans with Humans Watch SoLAR Webinar on Designing Learning Analytics for Humans with Humans

Panel: Data Analytics and Student Success

Xavier Ochoa, New York University

October 15, 2019

“Big Data” analytics have permeated many aspects of our lives, for good and ill. This discussion centered around how educational institutions leverage the power of data analytics to improve student learning, persistence, and graduation. Panelists also discussed how to gain these benefits without violating privacy, marginalizing teachers, or introducing systematic bias that may harm some students.

Learning Analytics for Lectures, Design and Informal Learning: Innovations from Japan

October 3, 2019

A series of flash talks from our visitors Drs. Hiroaki Ogata, Atsushi Shimada, and Masanori Yamada from Kyoto University, in which they described their work exploring the possibilities for learning analytics in face-to-face lectures, in informal learning environments, and in informing course design.

- Connecting Formal & Informal Learning through Learning Evidence & Analytics, Hiroaki Ogata

- Toward Learning Analytics for Reconsideration of Instructional Design, Masanori Yamada

- Advanced Learning Analytics for Face-to-face Lecture Support, Atsushi Shimada

Aligning Learning Analytics with Classroom Practices & Needs

Simon Knight, University of Technology Sydney

October 1, 2019

How do we make use of data about our students to support their learning while ensuring that learning analytics developers and educators align analytics with educator practice and needs? The University of Technology Sydney has taken a participatory design-based approach to designing and implementing learning analytics in practice, and understanding their impact. Their work has identified existing practices with which learning analytics may be aligned to augment them. In this talk, Dr. Knight introduced some of these projects, particularly drawing on their work in developing analytics to support student writing (writing analytics), giving examples of how analytics were aligned with existing pedagogic practices to support learning. Through this augmentation, supported by design-based approaches, Dr. Knight argued they can develop research and practice in tandem.

Presentation Slides from Aligning Learning Analytics with Classroom Practices & Needs

Advanced Analytics in Medical Education

Martin Pusic, NYU Langone Health

April 16, 2019

New educational technologies enable innovative health professions approaches with the potential to promote patient safety, better focus on learner needs, and enhance learning efficiency and effectiveness. In essence, the goal is to help learners climb the learning curve to expert clinical performance faster without sacrificing long-term retention. Dr. Pusic described a novel training approach that uses hundreds of Cognitive Simulations of radiology and ECG cases. This system not only provides an immense volume of simulated cases, but also allows strategic selection of cases to optimize learning according to the learner’s current skill state. Using this approach, learners can achieve in hours or days a level of experience and performance that would normally require years to accumulate. The vision is to have learners practice on simulated cases in the same way a violinist would practice their scales: practicing to mastery before attempting the performance that matters (i.e., in a real-life setting). Emerging evidence suggests important differences in the number, type, and sequence of cases, and the manner in which feedback is provided. Spoiler alert: Training to expertise probably requires far more practice than is currently done.

Presentation Slides from Advanced Analytics in Medical Education

From Attendance to Analytics: Traditional Strategies & Emerging Approaches to Data-Informed Teaching

A panel hosted in collaboration with FAS Office of Educational Technology.

March 26, 2019

Instructors routinely adjust their courses based on diverse forms of data, from attendance records to quiz grades to student annotations on readings. Faculty panelists explored a variety of ways faculty do and could use data to inform their teaching by sharing their experiences and methods. Experts in learning research discussed ways to enhance these practices with services such as the NYU Learning Analytics Dashboard.

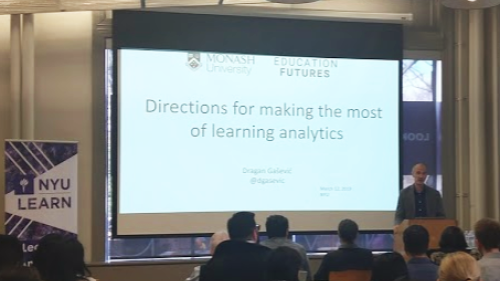

Directions for Making the Most of Learning Analytics

Dragan Gašević, Monash University

March 12, 2019

The analysis of data collected from user interactions with technology has attracted much attention as a promising approach to enhancing the human learning process. This growing interest led to the formation of the field of learning analytics. The field has now entered the next phase of maturation with a growing community that has an evident impact on research, practice, policy, and decision-making. This talk first provided a brief overview of recent developments in the field and then explored two key challenges for learning analytics that require immediate attention: i) validity of data collection and analysis and ii) user interaction with results of analytics to inform action. The talk shared promising directions for addressing the two challenges by considering learning analytics as an interdisciplinary interplay between data science, theories of human learning, and design.

Automated Writing Evaluation Feedback to Support Learning

Jill Burstein, Educational Testing Service

February 12, 2019

Automated writing evaluation (AWE) uses natural language processing (NLP) methods to detect and extract linguistic features relevant to writing quality (for example, use of sources, claims, and evidence; topic development; coherence; and grammatical conventions). The talk described the inner workings of AWE systems – specifically, what these systems can do now. This was illustrated with a demo of Writing Mentor™ (WM) - a Google Docs add-on that provides students with AWE-based feedback to help them improve their writing in a principled manner - and the presentation of results from qualitative usability evaluations with users in the wild.

Presentation Slides from Automated Writing Evaluation Feedback to Support Learning

Learning from Every Student: Leveraging Analytics to Support Student Success In Higher Education

Stephanie Teasley, University of Michigan

November 27, 2018

The research community for Learning Analytics is growing rapidly, promising new insights into learning and resulting innovations in pedagogy. For this promise to be realized, however, we need the capacity to leverage educational data for scholarly research, and apply research results at the kind of scale that truly changes how we teach and learn. In this talk, Dr. Teasley presented how the University of Michigan is engaged in learning analytics as an institutional initiative aimed at leveraging the data produced by digitally-mediated educational tools to better understand and improve student outcomes. Dr. Teasley shared two examples of the way the University of Michigan is using learning analytics to support student success. In the first, she described how analytics help us understand the ways curricular pathways impact students. In the second, she described the university's efforts to understand how student-facing dashboards can be designed to support important meta-cognitive skills.

LEARN Lounge at NYU Technology Summit

November 14, 2018

Gesture Detection System & Dynamic Network Wall Demo

Yoav Bergner and Xavier Ochoa

Data-Driven Decision-Making & Learning Design Workshop

Alyssa Wise, Ben Maddox and Yoav Bergner

How exactly can learning analytics inform teaching and course design? Using concrete examples, participants explored the different kinds of insight innovative educational data analysis techniques offer and considered their specific application to their courses.

Opposites Attract: Analytics and the Humanities Seminar

Robert Squillace and Andrew Brackett

The session explored analytics use from the instructor’s perspective, focusing on a partnership to develop a faculty-facing analytics tool for a seminar that emphasizes project-based learning. Visual examples illustrated how the learning analytics service supported instructional decision-making and showcased recent enhancements to the data visualizations.

More Than Lightning in a Bottle: (How) Will Learning Analytics Transform Teaching & Learning?

Alyssa Wise, Martin Pusic and Xavier Ochoa

Increased data availability and new analysis methods offer exciting opportunities to look inside learning processes in ways never before possible. But (how) will this transform university teaching and learning?

Quantitative Ethnography: Turning Big Data into Real Understanding

David Williamson Shaffer, UW-Madison

October 30, 2018

In the age of Big Data, we have more information than ever about what students are doing and how they are thinking. However, the sheer volume of data available can overwhelm traditional qualitative and quantitative research methods, leading to research that finds significance without meaning. The science of quantitative ethnography connects the study of culture with statistical tools to understand learning, taking a critical step in the new field of learning analytics: going beyond looking for patterns in mountains of data to tell textured stories at scale.

Interactive Workshop on Quantitative Ethnography: Open Source Tools for Analyzing Large Sets of Discourse Data

David Williamson Shaffer, UW-Madison

October 29, 2018

This workshop introduced participants to Quantitative Ethnography, a set of tools for modeling complex and collaborative thinking. A central premise of Quantitative Ethnography is that learning is a process of enculturation in which students learn to make relevant connections among the skills, concepts, and/or practices in a domain. Quantitative Ethnography models the structure of these connections in large- and small-scale datasets, and logfiles of many kinds, including transcripts of structured and semi-structured interviews or video data, games and simulations, chat, email, and social media. By modeling patterns of connections in discourse, Quantitative Ethnography helps researchers quantify and visualize the development of complex and collaborative thinking.

This interactive workshop provided an overview of Quantitative Ethnography, with an emphasis on the conceptual and practical issues of data management, coding, and modeling, and on open-source tools to address these issues: nCoder, a tool for generating and validating qualitative codes; and ENA, a tool for modeling, visualizing, and testing connections in data.

Learning Analytics in Physical Spaces: Capturing & Analyzing Multimodal Learning Traces

Xavier Ochoa, New York University

October 2, 2018

The goal of Learning Analytics is to understand and improve learning. However, learning is not always mediated by a computational system. It also happens in face-to-face, hands-on, unbounded and analog learning settings such as classrooms and labs. The sub-field of Multimodal Learning Analytics (MMLA) emphasizes the analysis of natural, rich modalities of communication and expression during learning activities, such as students’ actions, speech, writing, and nonverbal interaction (e.g. gestures). This talk explored several techniques to capture and analyze multimodal learning traces, giving examples of how to use these multimodal analyses to understand the learning process in physical contexts and provide feedback to its participants. The talk concluded with a discussion of opportunities that Learning Analytics opens for non-digital learning.

Reflective Writing Analytics

Andrew Gibson, University of Technology Sydney

March 8, 2017

Reflective Writing Analytics (RWA) brings together two worlds: the human world of reflection, and the computational world of analysis. RWA holds potential to discover latent themes, temporal patterns, and features related to the well-being of the writer. However, RWA is complicated by explanatory divergence between its psychosocial and computational dimensions, making it difficult to compute analytics that are considered meaningful to the people who use them.